Storage

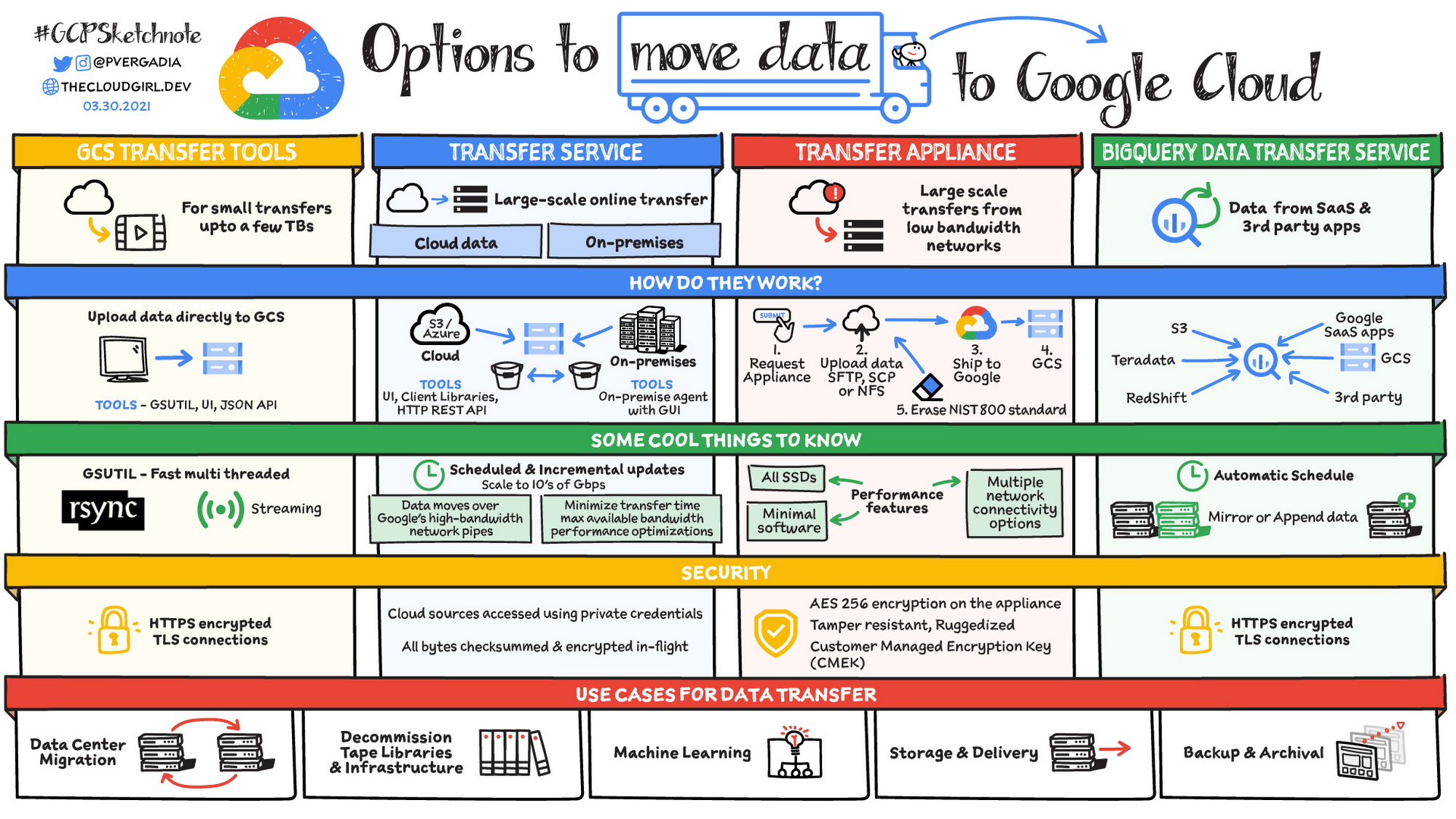

- Data Transfer Appliance: Ingest only, 100TB or 480TB high-capacity rackable storage server to physically ship data. It is recommended for data that exceeds 10 TB or would take more than a week to upload.

- Data Rehydration is required to access and use the transferred data. The data is uploaded to a staging GCS bucket compressed, deduplicated and encrypted. Data rehydration reverses this process and restores the data to a usable state.

- Storage Transfer Service | cloud: Source can be S3, Azure Blob Storage, an HTTP/HTTPS endpoint, or another GCS bucket. Set up scheduled or one-time transfers. You pay for its actions.

- BigQuery Data Transfer Service is different, use `bq` commands or API. This automates data movement into BigQuery on a scheduled, managed basis. Can also support copying BQ datasets from one region to another.

- Also different from this Transfer Service | on-premises, which is used for >1TB of on-premises data.

- An agent that runs inside a Docker container is installed on-prem. You need at least one agent per project, and the workload is distributed across agents

- The Transfer Service uses Cloud Pub/Sub to communicate with the agents

- Always have the `gsutil` scripts and browser-based tools. Used when data is under 1TB.

- Common `gsutil` commands are similar to Linux file management: Make Bucket: `mb`, Copy: `cp`, Move: `mv`, Remove: `rm`

- Another helpful table for deciding when to use which one
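The size and upload-time thresholds above can be turned into a quick estimate. This is a minimal sketch, assuming decimal terabytes and a link that is fully and continuously available for the upload (real throughput is usually lower). The function names are hypothetical, and the cut-offs are only the rules of thumb from these notes, not an official decision tree.

```python
def upload_days(size_tb: float, bandwidth_mbps: float) -> float:
    """Days needed to push size_tb terabytes over a bandwidth_mbps link."""
    bits = size_tb * 1e12 * 8                  # decimal terabytes -> bits
    seconds = bits / (bandwidth_mbps * 1e6)    # megabits/s -> bits/s
    return seconds / 86_400                    # seconds per day


def suggest_transfer_tool(size_tb: float, bandwidth_mbps: float) -> str:
    """Apply the thresholds listed in these notes (assumed, not official)."""
    if size_tb > 10 or upload_days(size_tb, bandwidth_mbps) > 7:
        return "Transfer Appliance"
    if size_tb > 1:
        return "Transfer Service (on-premises)"
    return "gsutil"


if __name__ == "__main__":
    for size_tb, mbps in [(0.5, 1000), (5, 1000), (5, 10), (50, 10_000)]:
        days = upload_days(size_tb, mbps)
        tool = suggest_transfer_tool(size_tb, mbps)
        print(f"{size_tb} TB at {mbps} Mbps: {days:.2f} days, {tool}")
```

For example, 10 TB over a sustained 100 Mbps link works out to a little over nine days, which already crosses the one-week line.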

- Cloud Filestore – Fully-managed file-based storage system, multiple VMs can write to it, it’s a network attached storage (NAS) solution

- Performance is consistent

- Accessible to GCE and GKE through a VPC via NFSv3 protocol, not compatible with Cloud Functions or App Engine

- Previously had to be combined with a separate backup system, but Google Cloud now has a built-in backup tool

- Provisioned in TB in standard or premium tier (up to 64TB ea.), you pay for the provisioned amount, unlike GCS where you only pay for what you use

- Premium SKU offers higher throughput and IOPS

- Cloud Storage (GCS) is an infinitely scalable, fully-managed, versioned, and highly-durable object storage. This service is important and could use its own section.

- You create buckets, and objects go in buckets

- URLs are predictable, kind of like a file system, but it’s NOT a file system and this can cause some interesting issues depending on your use case

- Accessed through a RESTful JSON or XML API, JSON is preferred because XML doesn’t support everything yet

- Integrated site hosting and CDN functionality

- You only pay for what you use

- You can control access at a uniform level with IAM (bucket or key prefix) or at object level with ACLs (mostly for interoperability with S3, no audit logs)

- Signed Policy Documents specify what can be uploaded to a bucket, this offers control over size, content type, and other upload characteristics

- Object Lifecycle Management can be set up to automatically delete or archive objects that meet certain rules, such as:

- Downgrade storage class on objects older than a year

- Delete objects created before a date

- Keep only the 3 most recent versions of an object

- This happens asynchronously, so rules may not be applied immediately; changes can take up to 24 hours to take effect

- To enable lifecycle management for a bucket from a .json file: `gsutil lifecycle set CONFIG_FILE gs://BUCKET_NAME`
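The three example rules above could be written as a lifecycle configuration along these lines. This is a sketch, assuming the `rule` / `action` / `condition` layout and the `age`, `createdBefore`, and `numNewerVersions` condition names from the Cloud Storage lifecycle documentation. The `COLDLINE` target and the cut-off date are placeholders chosen for illustration.

```python
import json

# Each entry mirrors one of the example rules listed in the notes.
lifecycle_config = {
    "rule": [
        {   # Downgrade storage class on objects older than a year
            "action": {"type": "SetStorageClass", "storageClass": "COLDLINE"},
            "condition": {"age": 365},
        },
        {   # Delete objects created before a date (placeholder date)
            "action": {"type": "Delete"},
            "condition": {"createdBefore": "2020-01-01"},
        },
        {   # Keep only the 3 most recent versions: delete any version
            # that already has 3 or more newer versions
            "action": {"type": "Delete"},
            "condition": {"numNewerVersions": 3},
        },
    ]
}

config_json = json.dumps(lifecycle_config, indent=2)
print(config_json)
```

Saving the printed JSON to a file gives something that can be passed as `CONFIG_FILE` to the `gsutil lifecycle set` command; check the rules against the current documentation before applying them to a real bucket.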

- Object Versioning can maintain multiple versions of an object, this is great for data recovery when you need to restore objects that were overwritten or deleted. Applied at the bucket level.

- Retention Policies are best for compliance and prevent the deletion/modification of the bucket’s objects for a specified minimum period of time after they’re uploaded

- Directory Synchronization can sync a VM directory with a bucket: `gsutil rsync`

- Use Cloud Storage FUSE (gcsfuse) to mount Cloud Storage buckets as file systems

- Setting a bucket to allUsers with Reader access makes it publicly accessible

- You can use the `gsutil label` command to set labels using flags or a JSON file; `gsutil` also helps with ACLs and moving data

- ACLs can be used instead of Cloud IAM to grant access but aren’t recommended unless you’re moving data from AWS or require fine-grained security

- To use a customer-managed encryption key and encrypt files before moving into GCS, you can supply a .boto file in your `gsutil` configuration

- You can use signed URLs to enable data owners to interact with data, but that does not show who accessed the data and may not satisfy nonrepudiation/auditing/monitoring requirements. They are time-limited links to provide access to resources to anyone in possession of the URL, regardless of whether they have a Google account.

- You can notify on object changes using Pub/Sub
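On the earlier point that GCS looks like a file system but is not one: object names live in a flat namespace, and "directories" are only an effect of listing with a prefix and a delimiter. The toy model below emulates that listing behaviour in plain Python. It does not call any Google API, and `list_objects` and the sample object names are hypothetical.

```python
def list_objects(object_names, prefix="", delimiter="/"):
    """Emulate a prefix + delimiter listing over a flat set of object names.

    Returns (objects, prefixes): the names directly under `prefix`, and the
    pseudo-directories found one level below it.
    """
    objects, prefixes = [], set()
    for name in sorted(object_names):
        if not name.startswith(prefix):
            continue
        rest = name[len(prefix):]
        if delimiter in rest:
            # Anything past the next delimiter is folded into one "folder"
            prefixes.add(prefix + rest.split(delimiter, 1)[0] + delimiter)
        else:
            objects.append(name)
    return objects, sorted(prefixes)


# A bucket is just a flat collection of names; no directory objects exist.
bucket = {"logs/2024/app.log", "logs/2024/db.log", "logs/readme.txt", "index.html"}

if __name__ == "__main__":
    print(list_objects(bucket))
    print(list_objects(bucket, prefix="logs/"))
```

One consequence is that there is no directory entry to rename: "moving a folder" means rewriting every object that shares the prefix.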

Storage Classes (From Hot to Cold)

- Multi-Regional – Geo-redundant globally available data. Good for data that is frequently accessed (“hot” objects) around the world such as serving website content, streaming videos, or gaming and mobile applications. (99.95% availability SLA)

- Dual-Region – High availability and low latency across 2 regions (only for certain predefined pairs)

- Regional – Lower latency and stored in a single region. Good for data that needs to be accessed by a Dataproc cluster or GCE VM in the same region

- Nearline – Access less than once/month (backups)

- Coldline – Access less than once/quarter (backups)

- Archive – Access less than once/year
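The access-frequency rules of thumb above can be sketched as a small helper. The function name is hypothetical, and the 30/90/365-day cut-offs are an assumed reading of "once per month / quarter / year". Cost, retrieval fees, and minimum storage durations also matter in practice and are not modelled here.

```python
def suggest_storage_class(days_between_accesses: float) -> str:
    """Map expected access frequency to a class, per the notes' rules of thumb."""
    if days_between_accesses > 365:
        return "Archive"      # accessed less than once a year
    if days_between_accesses > 90:
        return "Coldline"     # accessed less than once a quarter
    if days_between_accesses > 30:
        return "Nearline"     # accessed less than once a month
    # Hot data: the location type depends on latency and redundancy needs
    return "Multi-Regional, Dual-Region, or Regional"


if __name__ == "__main__":
    for days in (1, 45, 120, 400):
        print(days, "->", suggest_storage_class(days))
```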

Encryption of VM Disks and Cloud Storage Buckets

Default Encryption

- Data is automatically encrypted prior to being written to the disk

- Each encryption key is itself encrypted with a set of master keys

Customer-Managed Encryption Keys (CMEK)

- Google-generated data encryption key (DEK) will be used

- Allows customers to create, use, and revoke the key encryption key (KEK)

- Here is additional information about envelope encryption if this is confusing

- Keys are still managed with KMS, but customers have more control over life cycle

- List of GCP services with an integration with KMS
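The DEK/KEK relationship can be sketched in a few lines. This is an illustration of the envelope structure only: the cipher is a toy XOR keystream built from SHA-256 so the example stays within the standard library, and it is not secure and not what Google uses. All function names are hypothetical. In the real service the KEK stays in KMS and wrapping happens there.

```python
import hashlib
import secrets


def _toy_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Toy stream cipher (XOR with SHA-256 blocks). Illustration only, NOT secure."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        counter = (offset // 32).to_bytes(8, "big")
        pad = hashlib.sha256(key + nonce + counter).digest()
        out.extend(b ^ p for b, p in zip(data[offset:offset + 32], pad))
    return bytes(out)


def envelope_encrypt(kek: bytes, plaintext: bytes) -> dict:
    dek = secrets.token_bytes(32)            # fresh data encryption key
    data_nonce = secrets.token_bytes(16)
    wrap_nonce = secrets.token_bytes(16)
    return {
        "ciphertext": _toy_cipher(dek, data_nonce, plaintext),  # data under DEK
        "data_nonce": data_nonce,
        "wrapped_dek": _toy_cipher(kek, wrap_nonce, dek),       # DEK under KEK
        "wrap_nonce": wrap_nonce,
    }


def envelope_decrypt(kek: bytes, envelope: dict) -> bytes:
    # Unwrap the DEK with the KEK first, then decrypt the data with the DEK.
    dek = _toy_cipher(kek, envelope["wrap_nonce"], envelope["wrapped_dek"])
    return _toy_cipher(dek, envelope["data_nonce"], envelope["ciphertext"])


if __name__ == "__main__":
    kek = secrets.token_bytes(32)
    envelope = envelope_encrypt(kek, b"object contents")
    print(envelope_decrypt(kek, envelope))
```

Only the wrapped DEK is stored next to the data, so revoking or destroying the KEK leaves the stored DEK, and therefore the data, unreadable.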

Customer-Supplied Encryption Keys (CSEK)

- Allows customers to keep keys on-premises and use them to encrypt their data in cloud services

- Google can’t recover them! The keys are never stored on the disk unencrypted

- You provide your key at each operation and Google purges it when the operation completes

- Only usable for disk encryption on VMs and Cloud Storage

- The CSEK encrypts the object data, the CRC32C checksum, and the MD5 hash
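"You provide your key at each operation" means, for Cloud Storage, sending the key with every request. The sketch below builds the three request headers described in the Cloud Storage CSEK documentation (algorithm, base64-encoded key, base64-encoded SHA-256 of the key). It only constructs the headers and sends nothing; `csek_headers` is a hypothetical helper name.

```python
import base64
import hashlib
import secrets


def csek_headers(raw_key: bytes) -> dict:
    """Build the per-request headers for a customer-supplied AES-256 key."""
    if len(raw_key) != 32:
        raise ValueError("CSEK must be a 256-bit (32-byte) key")
    key_hash = hashlib.sha256(raw_key).digest()
    return {
        "x-goog-encryption-algorithm": "AES256",
        "x-goog-encryption-key": base64.b64encode(raw_key).decode("ascii"),
        "x-goog-encryption-key-sha256": base64.b64encode(key_hash).decode("ascii"),
    }


if __name__ == "__main__":
    key = secrets.token_bytes(32)   # keep this safe: Google cannot recover it
    for name, value in csek_headers(key).items():
        print(f"{name}: {value}")
```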

External Key Manager

- Customer keys can also be managed by a trusted partner using the External Key Manager (EKM) service; see, for example, the demo by Thales

- Customer keys are never sent to Google

- List of GCP services with an integration with EKM

Client-Side Encryption

- Data is encrypted before it is sent to the cloud with the customer’s keys and tools

- Google doesn’t even know whether the data is encrypted, and there is no way to recover the keys. If you lose your keys, remember to delete the objects, because the data is effectively crypto-deleted